WebmasterWorld administrator tedster reports that Google will soon treat subdomains the same way it treats folders on a site. In short, he says Matt Cutts announced that in a few weeks Google will roll out a new filter so that only two results from a domain (whether on a subdomain or in a folder) show up for a search. Here is tedster's exact quote from a WebmasterWorld thread:

News flash from Las Vegas PubCon. Matt Cutts informed us that Google will very soon begin treating subdomains and subdirectories the same in this fashion: there will be only 2 total urls from a domain in any set of search results, so no more getting 3, 4 or however many spots via subdomains. We didn't get any more information than just that basic heads-up.

Of course, you can expect exceptions to this rule. For example, one would think blogspot.com subdomains would be exempt. But overall, if this change happens, it could be a pain in the neck for some SEOs. It will make it a bit harder for one site to "own the search results." Plus, it may force some search engine reputation management companies to change their strategies.

In another WebmasterWorld thread, tedster gives us a bit more detail on how this may work (bolding for emphasis):

Here's what happens now. The first step of results retrieval for any single search still has no limit on how many urls can be returned from a domain. In the early days of Google, a domain could even have all 10 first page spots and still keep on going. It could even be embarrassing! Today, the preliminary, raw retrieval of roughly 1,000 results still puts no limit on how many urls can be returned from a given domain. But there's a further processing step - a filter kicks in. That filter is supposed to ensure that only 2 urls maximum from any domain will actually be shown.

If those two urls happen to be on the same page, then they will cluster together on that page rather than show at their "true" algorithmically determined position. But through all the total pages of any search result, any single domain is supposed to show up a maximum of 2 times.

Now here's where we've been able to game the current situation. Subdomains are treated like a separate domain, and so you can get two results for www.example.com, two more for sub1.example.com, two more for sub2.example.com, and so on.

Matt Cutts mentioned that Google is working on code to eliminate that possibility for most domains. That is, Google plans to treat most subdomains essentially like any other url on the main domain, and they will limit that domain, INCLUDING all its subdomains, to two positions total on any given search.

At that point, the whole subdomain vs. subdirectory decision will lose most of its importance - and your wwww urls will not show up, even though they may still be causing you trouble behind the scenes.
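To make the difference concrete, here is a minimal Python sketch of the two-per-site filter tedster describes, run once with subdomains counted as separate sites and once with them folded into the main domain. This is only an illustration under stated assumptions, not Google's actual code: the function names, the sample URLs, and the "last two labels" rule for finding the main domain are all made up for this example, and the clustering of two results on the same page is not modeled.

```python
from collections import Counter
from urllib.parse import urlsplit


def host_key(url):
    """Current behavior as described: each hostname counts as its own site."""
    return urlsplit(url).hostname


def domain_key(url):
    """Planned behavior as described: fold subdomains into the main domain.

    Naive assumption: the main domain is the last two labels of the hostname.
    Real code would need something like the Public Suffix List (e.g. .co.uk).
    """
    return ".".join(urlsplit(url).hostname.split(".")[-2:])


def limit_per_site(ranked_urls, key, max_per_site=2):
    """Keep the ranked order, dropping URLs once a site has max_per_site."""
    seen = Counter()
    kept = []
    for url in ranked_urls:
        site = key(url)
        if seen[site] < max_per_site:
            seen[site] += 1
            kept.append(url)
    return kept


# Hypothetical raw retrieval, already in ranked order.
raw_results = [
    "http://www.example.com/a",
    "http://sub1.example.com/b",
    "http://www.example.com/c",
    "http://sub1.example.com/d",
    "http://www.example.com/e",
    "http://other.org/f",
    "http://sub2.example.com/g",
]

by_host = limit_per_site(raw_results, host_key)      # subdomains count separately
by_domain = limit_per_site(raw_results, domain_key)  # subdomains folded together
```

With this sample data, `by_host` keeps six URLs, five of them on example.com hostnames, while `by_domain` keeps only three, two of them on example.com. Any exceptions (such as the blogspot.com case mentioned above) would need extra handling that this sketch leaves out.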

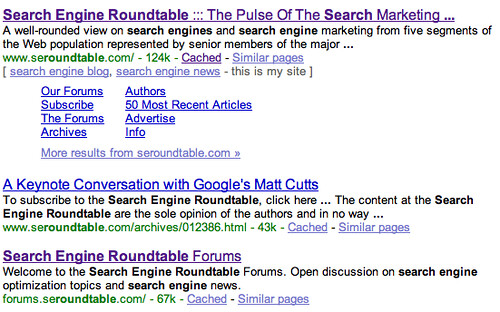

For a practical example, here is a search for "search engine roundtable" (our site) that shows the top three listings from this domain:

The top two listings are from www.seroundtable.com, and the third listing is from the subdomain forums.seroundtable.com. If Google makes this change, I will lose either the second www result or the forum result. For relevancy, does this matter much?

Honestly, with the introduction of Sitelinks on this particular site and for this particular search, no, it won't impact relevancy, because the searcher can use those Sitelinks. But for sites that do not have Sitelinks, it may make a big difference to the searcher.

Forum discussion at WebmasterWorld.

Update: See update to this post over here.