A week or so ago, Google launched the Google Image Search redesign. In doing so, they left out an important feature: the ability to report offensive images.

Yesterday, I spotted a thread at Google Web Search Help in which someone complained that SafeSearch didn't filter out an image. I was about to tell him to use the "report offensive image" feature, but when I looked for it, it was gone.

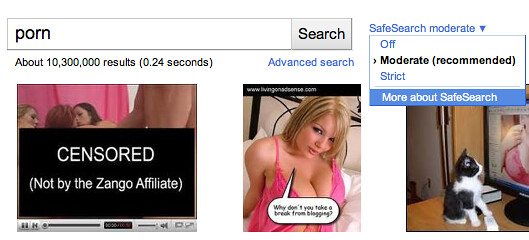

What I see now:

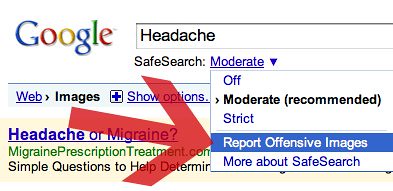

What I saw in the old design:

As pointed out in the thread, the feature rarely worked anyway, and the better way to remove offensive images was to use the webpage removal tool linked from the SafeSearch page. But to me, that is more confusing than the old way.

Will Google bring it back?

Forum discussion at Google Web Search Help.

Google sent me a response, which seems to imply that they forgot and will add something in the near future:

> We're still using the SafeSearch filter, set to "moderate" by default, to filter pornographic or offensive images from the search results. Most of our SafeSearch filtering is done automatically -- user reports just supplement that filter. While no filter is 100 percent accurate, we're continuing to update SafeSearch to keep it as current and comprehensive as possible.
>
> To your question -- in the new UI we didn't include the old reporting link; we're working on a new way for users to report offensive images directly from the new image results grid. We don’t have a specific timeframe for that, but in the meantime, our webpage removal request tool is available as always to report offensive content that SafeSearch may have missed.